MITRE ATLAS™

This section details the MITRE Adversarial Threat Landscape for Artificial-Intelligence Systems (MITRE ATLAS™). [1]

Summary

MITRE ATLAS™ is a publicly accessible, living knowledge base of adversary tactics and techniques based on real-world AI incident observations. As AI systems see wider adoption, ATLAS complements the traditional MITRE ATT&CK® framework by focusing on the vulnerabilities and attack vectors specific to Machine Learning and GenAI systems.

Tactics

A Tactic represents the “why” of an ATLAS™ technique. It is the adversary’s tactical objective: the reason for performing an action.

MITRE ATLAS™ includes 16 distinct Tactics:

| ID | Title |
|---|---|
| AML.TA0000 | AI Model Access |
| AML.TA0001 | AI Attack Staging |
| AML.TA0002 | Reconnaissance |
| AML.TA0003 | Resource Development |
| AML.TA0004 | Initial Access |
| AML.TA0005 | Execution |
| AML.TA0006 | Persistence |
| AML.TA0007 | Defense Evasion |
| AML.TA0008 | Discovery |
| AML.TA0009 | Collection |
| AML.TA0010 | Exfiltration |
| AML.TA0011 | Impact |
| AML.TA0012 | Privilege Escalation |
| AML.TA0013 | Credential Access |
| AML.TA0014 | Command and Control |
| AML.TA0015 | Lateral Movement |

Techniques

A Technique represents “how” an adversary achieves a tactical goal by performing an action. For instance, Discover AI Model Family (AML.T0014) is a Technique under the Discovery (AML.TA0008) Tactic.

Mitigations

A Mitigation is a security concept or technology that can be implemented to prevent or detect the successful execution of a Technique. For instance, Use Ensemble Methods (AML.M0006) is a Mitigation for Discover AI Model Family (AML.T0014).

It is important to note that a given Mitigation may be applicable to multiple Techniques, and a given Technique may be countered by multiple Mitigations.
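The many-to-many relationship can be sketched as a pair of lookup tables built from (Technique, Mitigation) pairs. This is a minimal illustration, not an ATLAS API: only the pair (AML.T0014, AML.M0006) comes from the text above, while the IDs prefixed with `EX.` and the helper name `index_pairs` are hypothetical placeholders introduced for the example.

```python
from collections import defaultdict

# (Technique, Mitigation) pairs.
# Only the first pair is taken from this section; every ID starting
# with "EX." is a hypothetical placeholder, not a real ATLAS entry.
PAIRS = [
    ("AML.T0014", "AML.M0006"),
    ("AML.T0014", "EX.M1"),   # hypothetical second mitigation for the same technique
    ("EX.T1", "AML.M0006"),   # hypothetical second technique for the same mitigation
]


def index_pairs(pairs):
    """Build both lookup directions from a list of (technique, mitigation) pairs."""
    by_technique = defaultdict(set)
    by_mitigation = defaultdict(set)
    for technique, mitigation in pairs:
        by_technique[technique].add(mitigation)
        by_mitigation[mitigation].add(technique)
    return dict(by_technique), dict(by_mitigation)


BY_TECHNIQUE, BY_MITIGATION = index_pairs(PAIRS)
```

Looking up `BY_TECHNIQUE["AML.T0014"]` returns every Mitigation recorded for that Technique, and `BY_MITIGATION["AML.M0006"]` returns every Technique that Mitigation covers, so neither side of the relationship is treated as the owner of the other.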

MITRE ATLAS™ Matrix

The MITRE ATLAS™ Matrix [2] is a view of the framework that organizes the Tactics and Techniques into a matrix, and may be used to identify and prioritize security controls. Each column is a Tactic, and the cells beneath it list the Techniques that serve that Tactic. Clicking a Technique opens its lists of Mitigations and Procedure Examples.

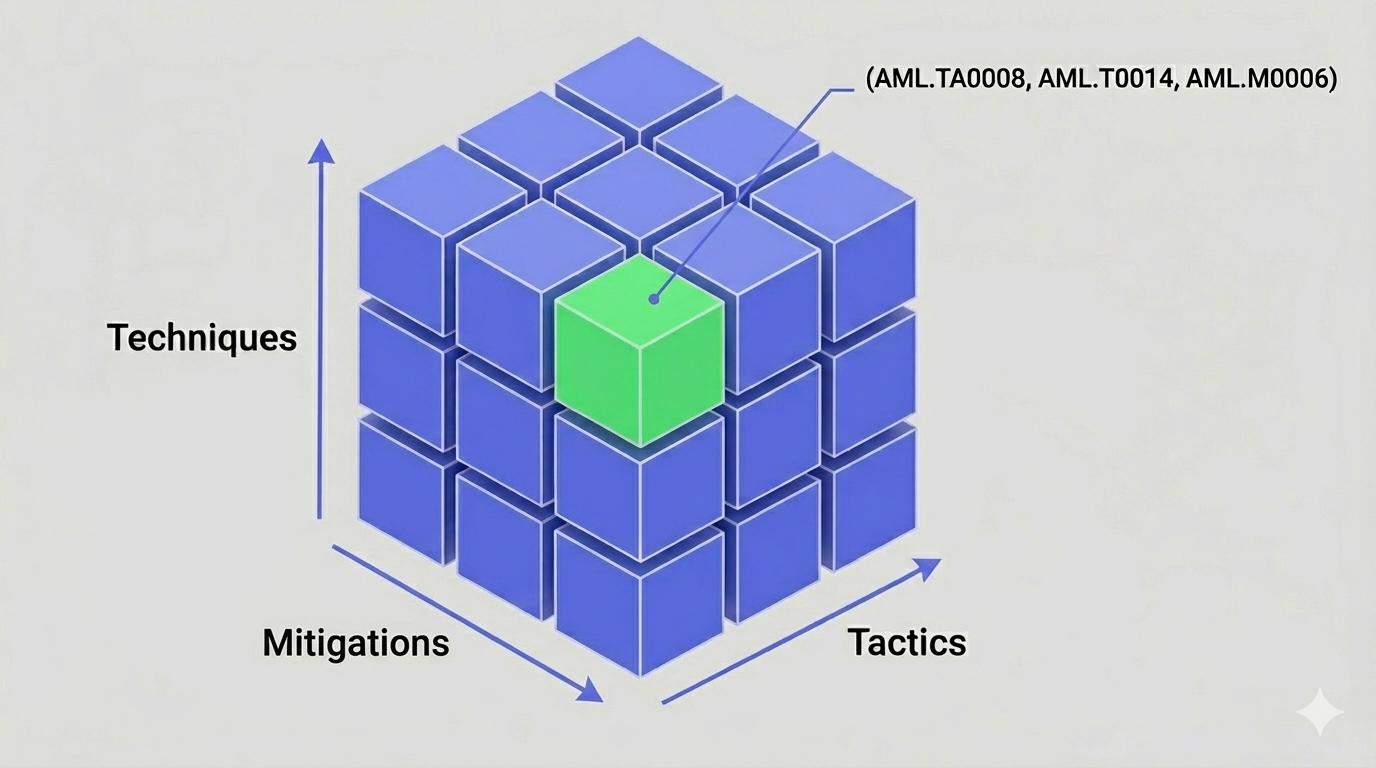

An alternative perspective is to think of the framework as a 3-dimensional matrix combining Tactics (x-axis), Techniques (y-axis), and Mitigations (z-axis). Each element in this matrix forms a tuple of (Tactic, Technique, Mitigation), as seen in Figure 1.

Figure 1: 3D view of the MITRE ATLAS™ Matrix.

Figure 1 highlights one element of the matrix, built from the examples used earlier in this section. This element is the tuple

(Tactic, Technique, Mitigation) = (AML.TA0008, AML.T0014, AML.M0006)

where

| ID | Title |
|---|---|
| AML.TA0008 | Discovery |
| AML.T0014 | Discover AI Model Family |
| AML.M0006 | Use Ensemble Methods |

Most tuple combinations are nonexistent due to the hierarchical nature of the framework, making the matrix highly sparse. Although imperfect, the 3D perspective simplifies the intricate structure that one otherwise has to navigate in the original MITRE ATLAS™ Matrix.
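One way to make the sparsity concrete is to store only the populated cells as a set of tuples and compare their count with the size of the full grid. The sketch below is illustrative only: the tuple (AML.TA0008, AML.T0014, AML.M0006) and the Tactic ID AML.TA0011 come from this section, the IDs prefixed with `EX.` are hypothetical placeholders, and the axes are deliberately tiny rather than a copy of the real framework.

```python
from itertools import product

# Toy axes. IDs starting with "EX." are hypothetical placeholders.
TACTICS = ["AML.TA0008", "AML.TA0011"]
TECHNIQUES = ["AML.T0014", "EX.T1"]
MITIGATIONS = ["AML.M0006", "EX.M1"]

# Sparse storage: keep only the (Tactic, Technique, Mitigation) cells that exist.
POPULATED = {("AML.TA0008", "AML.T0014", "AML.M0006")}


def density(populated, tactics, techniques, mitigations):
    """Fraction of the full 3D grid that is actually populated."""
    grid = set(product(tactics, techniques, mitigations))
    return len(populated & grid) / len(grid)


def mitigations_for(populated, tactic, technique):
    """Walk along the z-axis for one (Tactic, Technique) position."""
    return sorted(m for (ta, te, m) in populated if ta == tactic and te == technique)


print(density(POPULATED, TACTICS, TECHNIQUES, MITIGATIONS))  # 0.125
print(mitigations_for(POPULATED, "AML.TA0008", "AML.T0014"))  # ['AML.M0006']
```

Even in this 2 x 2 x 2 toy grid only one of eight cells is populated. Storing the tuples as a set, rather than as a dense array, keeps memory use proportional to the number of combinations that actually exist.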

Mapping to SCF C|P-RMM

The mapping of MITRE ATLAS™ Tactics and Techniques to the SCF C|P-RMM framework follows the same structure as described in the MITRE ATT&CK® section.

The MITRE ATLAS™ Tactic called Impact (AML.TA0011) directly represents the realization of the Risk in SCF C|P-RMM.

Critique

MITRE ATLAS™ is heavily anchored in traditional Machine Learning (ML) attacks, such as Data Poisoning, Model Evasion, and Model Inversion. For organizations that build modern applications strictly by wrapping commercial Large Language Model (LLM) APIs, many of these ML-specific techniques are out of scope, because responsibility for addressing them rests with the model provider. This can make the framework feel overwhelming or overly complex for teams focused solely on GenAI application security.